Building an AI prototype is one engineering problem. Running it for ten thousand requests a day, at a sane cost, with logs you can audit and a rollback you can trust — that is a different problem. We solve the second one.

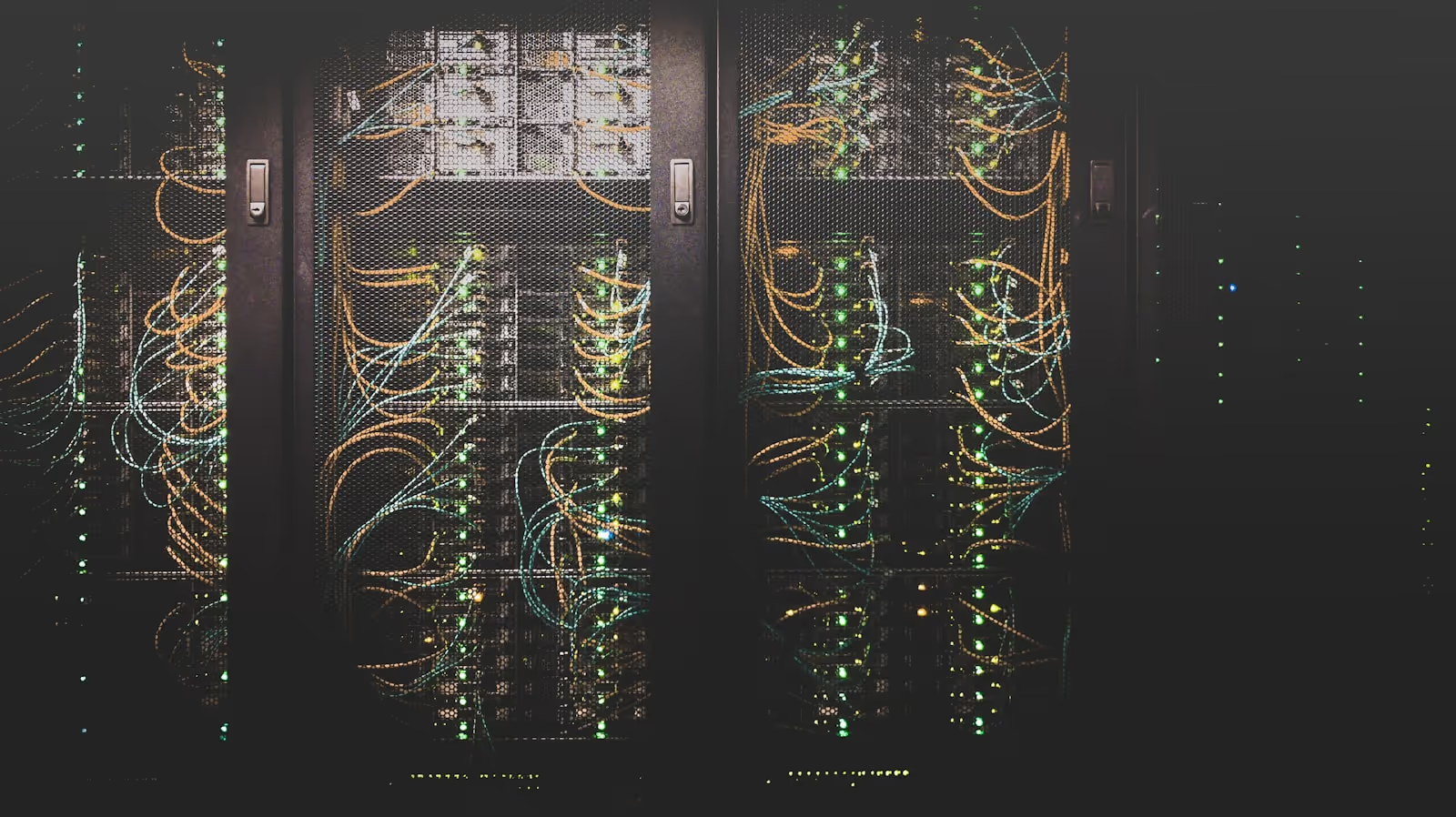

AI Deployment & Operations

Production deployment, scaling, monitoring, and cost controls for AI workloads on Cloudflare Workers AI, AWS Bedrock, and GCP Vertex AI.

AI in production — without surprise bills or downtime

Most "AI deployment" advice comes from teams running on a credit card and a hope. That is fine for a prototype and ruinous at scale. We design AI deployments with the boring infrastructure questions answered first: what happens at 100x load, who gets paged when latency spikes, and how do we cap monthly spend before it triples.

Inference platforms

Cloudflare Workers AI for edge inference, AWS Bedrock or GCP Vertex AI for managed models, and custom GPU deployments where they earn their keep.

Cost engineering

Monthly spend dashboards, per-tenant cost accounting, prompt caching to reduce repeat charges, and hard-stop budgets that cut off runaway loops.
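
To make "per-tenant accounting" and "hard-stop budgets" concrete, here is a minimal sketch of a spend guard that refuses a call before it reaches the model once a tenant's monthly cap would be exceeded. The class and function names, the in-memory ledger, and the per-million-token prices are all illustrative assumptions, not a specific provider's billing API; a real deployment would keep the ledger in a shared store with atomic updates.

```python
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when a charge would push a tenant past its hard monthly cap."""


def estimate_cost(input_tokens: int, output_tokens: int,
                  price_in_per_m: float, price_out_per_m: float) -> float:
    """Estimate one call's cost from token counts and per-million-token prices.

    The prices passed in are placeholders; real rates depend on provider and model.
    """
    return (input_tokens * price_in_per_m + output_tokens * price_out_per_m) / 1_000_000


@dataclass
class TenantBudget:
    monthly_cap: float   # hard stop for the month, in the billing currency
    spent: float = 0.0   # running total of accepted charges


@dataclass
class CostLedger:
    # Hypothetical in-memory ledger keyed by tenant ID (illustration only).
    tenants: dict[str, TenantBudget] = field(default_factory=dict)

    def charge(self, tenant_id: str, cost: float) -> float:
        """Accept a charge or refuse it; returns the tenant's remaining budget."""
        budget = self.tenants[tenant_id]
        projected = budget.spent + cost
        if projected > budget.monthly_cap:
            # Refuse before the model call, so a runaway loop fails fast
            # instead of accruing more spend.
            raise BudgetExceeded(
                f"{tenant_id}: {projected:.2f} would exceed cap {budget.monthly_cap:.2f}"
            )
        budget.spent = projected
        return budget.monthly_cap - budget.spent


if __name__ == "__main__":
    ledger = CostLedger({"acme": TenantBudget(monthly_cap=10.0)})
    per_call = estimate_cost(250_000, 50_000, price_in_per_m=3.0, price_out_per_m=15.0)
    calls = 0
    try:
        while True:  # simulate an agent stuck retrying the same request
            ledger.charge("acme", per_call)
            calls += 1
    except BudgetExceeded as exc:
        print(f"stopped after {calls} calls ({exc})")
```

With these made-up numbers each call costs 1.50, so the demo accepts six calls (9.00 spent) and refuses the seventh, which would bring spend to 10.50 against a cap of 10.00. Checking the projected total before the call, rather than reconciling afterwards, is what turns a budget into a hard stop.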

Observability

Latency, error-rate, and cost dashboards on Grafana or Datadog. Audit logs for every inference. Pages on regression, not just downtime.
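
"Pages on regression, not just downtime" means alerting when latency gets meaningfully worse, even while the service is still up and inside its SLO. A minimal sketch of that rule, assuming a nearest-rank p95 and a hypothetical 25% regression threshold against a baseline window (in practice this logic would live in a Grafana or Datadog alert rule, not application code):

```python
from collections.abc import Sequence


def p95(latencies_ms: Sequence[float]) -> float:
    """95th-percentile latency using the nearest-rank method."""
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
    return ordered[rank - 1]


def should_page(current_ms: Sequence[float], baseline_ms: Sequence[float],
                slo_ms: float = 2000.0, regression_ratio: float = 1.25) -> bool:
    """Page on an absolute SLO breach or a relative regression vs. baseline.

    slo_ms mirrors the sub-2-second p95 target; regression_ratio (25% slower
    than baseline) is an illustrative threshold, not a recommendation.
    """
    current = p95(current_ms)
    return current > slo_ms or current > regression_ratio * p95(baseline_ms)


if __name__ == "__main__":
    baseline = [10.0 * i for i in range(1, 101)]  # p95 = 950 ms
    slower = [13.0 * i for i in range(1, 101)]    # p95 = 1235 ms
    print(should_page(slower, baseline))
```

Here the slower window pages even though 1235 ms is well under the 2-second target, because it is more than 25% above the 950 ms baseline. An uptime check alone would never fire on it.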

Typical engagement

Take an existing prototype to production in 4 to 6 weeks. Or build production-grade infrastructure from scratch in 8 to 10 weeks.

Platforms

Cloudflare Workers AI, AWS Bedrock, GCP Vertex AI, Anthropic API, OpenAI API. Self-hosted on request (vLLM, Ollama).

Handoff

Runbooks, on-call playbook, deployment scripts, and 30 days of side-by-side support before we hand the system over.

Pricing range

Starting at ₱150,000 for prototype-to-production migration. Greenfield production deployments quoted per scope.

Reliability targets

99.9% uptime SLOs by default. Month-over-month cost variance within ±15%. Sub-2-second p95 inference latency for typical RAG workloads.
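
For a sense of what these targets mean in practice, the arithmetic is short. A 99.9% availability SLO leaves a 0.1% error budget, which over a 30-day month is 0.001 × 43,200 minutes, or about 43.2 minutes of allowed downtime. The ±15% cost target can be read as a bound on the relative change from the previous month's spend. The sketch below assumes a 30-day window and that reading of "variance"; both helper names are hypothetical.

```python
def error_budget_minutes(slo: float, days: int = 30) -> float:
    """Downtime an availability SLO allows over a window of `days` days."""
    return (1.0 - slo) * days * 24 * 60


def cost_within_variance(previous: float, current: float,
                         tolerance: float = 0.15) -> bool:
    """True if spend moved by no more than ±tolerance month over month."""
    if previous <= 0:
        raise ValueError("previous month's spend must be positive")
    return abs(current - previous) / previous <= tolerance


if __name__ == "__main__":
    print(f"{error_budget_minutes(0.999):.1f} minutes")  # prints "43.2 minutes"
    print(cost_within_variance(100_000, 112_000))        # +12%: within target
    print(cost_within_variance(100_000, 120_000))        # +20%: outside target
```

The same helper gives about 10.1 minutes for a 7-day window, which is a useful figure when deciding how much risk a single deploy can afford.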